How to AI Prompt with a Formatter: A Complete Guide to Structured Engineering

To master how to AI prompt with a formatter, you should […]

To master how to AI prompt with a formatter, you should implement the RTCCO framework (Role, Task, Context, Constraints, Output) using structured delimiters like XML or JSON. This engineering-focused approach treats prompts as modular software assets, which can reduce model hallucinations by up to 60% and cut manual processing time by 75% as of May 2026.

The Shift from Chatting to Structured Engineering: Why Use a Formatter?

By 2026, professional AI work has moved away from simple “chatting” toward a concept known as Prompt-as-Code (PaC). Instead of treating an LLM like a pen pal, engineers treat instructions as structured data that the model can parse with high precision.

Relying on “paragraph prompts”—those long, messy blocks of text—often leads to technical failures in production. This happens because models struggle to separate your actual instructions from the background data or the specific output requirements you’ve set.

Data from PromptOT suggests that moving to structured engineering can cut errors by 60% and speed up manual processing by 75%. Furthermore, hardcoding prompts as simple strings inside an application makes them a nightmare to maintain. Alex Ostrovskyy describes hardcoded prompts as the “modern equivalent of magic numbers in source code,” creating brittle systems that are nearly impossible to update without breaking something.

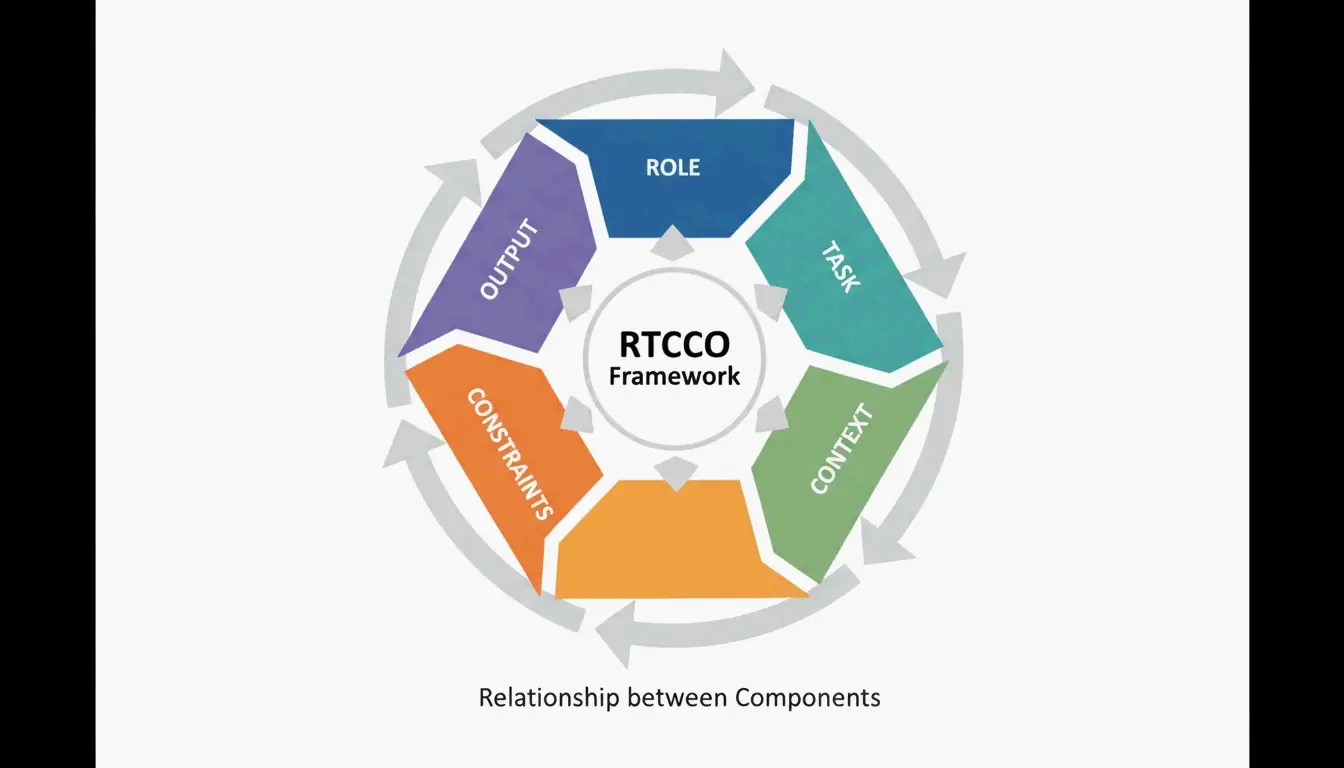

To solve this, the industry has adopted the RTCCO Framework. It organizes every prompt into five clear parts:

- Role: Who is the AI pretending to be? (e.g., a senior coder or a legal expert).

- Task: What is the specific action required?

- Context: What background info does the AI need to know?

- Constraints: What are the “must-nots” and boundaries?

- Output: What should the final result look like?

The Anatomy of a Formatted Prompt

A formatted prompt uses clear boundaries to separate these RTCCO elements. By using a formatter, you guide the LLM’s “attention” to the right places. Research shows that simply using clear delimiters between sections can boost model accuracy by 16–24% compared to standard plain-text instructions.

The Master Formatter Template: Implementing XML/JSON Delimiters

Reliable structured engineering starts with a “Skeleton Template” using machine-readable delimiters. While Markdown is common, XML-style tags (like <tag>...</tag>) generally perform better for advanced models like Claude 3.5 and GPT-5. XML creates clear walls that prevent the model from getting confused or “leaking” instructions into the final output.

The XML Skeleton Template:

<system_instructions>

<role> [Expert Persona] </role>

<primary_objective> [Main Goal] </primary_objective>

</system_instructions>

<context>

[Background Data or RAG Retrieval]

</context>

<task_requirements>

<rules> [Non-negotiable Constraints] </rules>

<steps> [Specific Workflow] </steps>

</task_requirements>

<output_format>

[JSON/XML/Markdown Specification]

</output_format>

<recency_recap>

[Reminder of Critical Constraints]

</recency_recap>

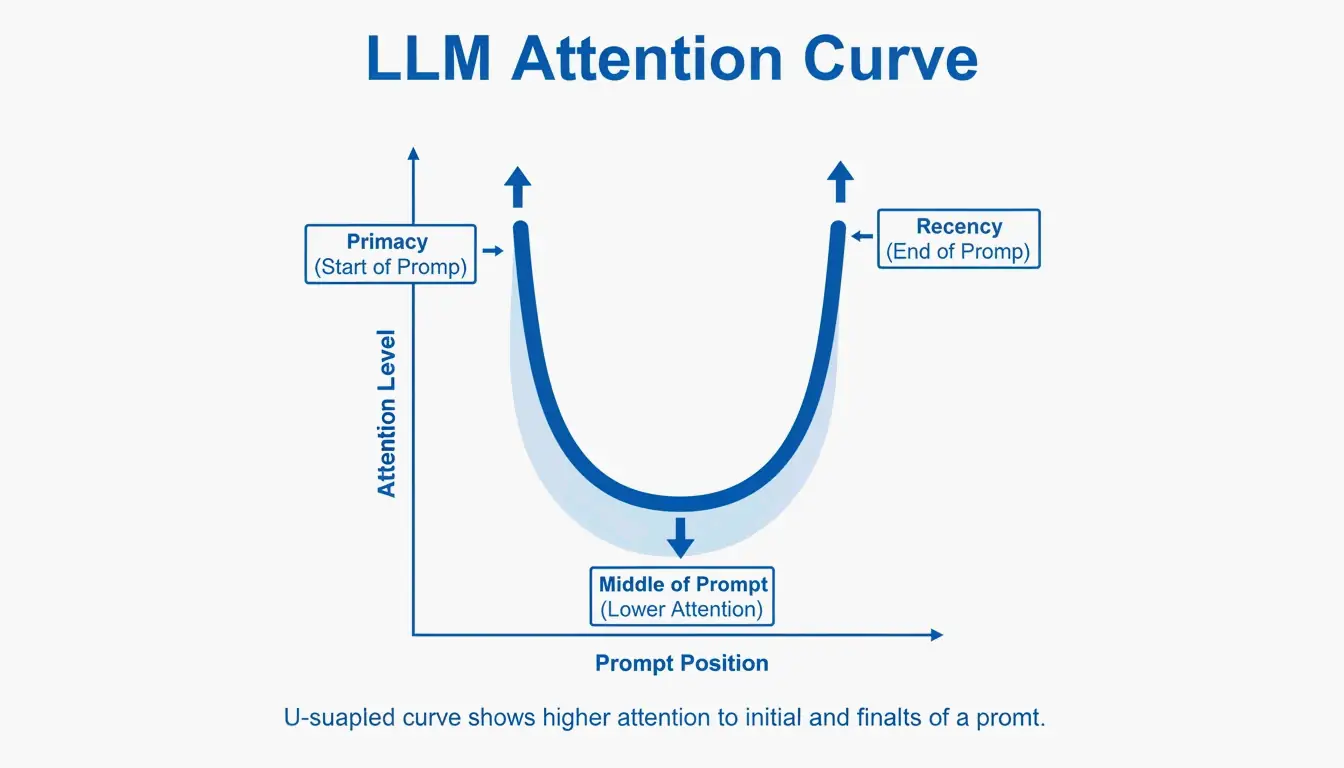

Where you place these blocks matters. Because of the “Primacy and Recency” effects, LLMs tend to remember the beginning and the end of a prompt best. Testing cited by PromptOT showed that moving critical rules from the middle of a prompt to the very end (the Recency Recap) boosted accuracy from 78% to 96% in real-world use. Keep the Role at the top to set the “knowledge pattern,” and put the most vital rules at the bottom.

Why Delimiters Prevent Prompt Injection and Leakage

Think of delimiters as security fences. By wrapping user-provided data in tags like <user_input>, you tell the model: “This is just data to work on, not a new set of instructions to follow.” This is a key defense against prompt injection attacks, where users try to trick the AI into ignoring its original rules.

How to Build a Modular Prompt Architecture?

Modular architecture is about breaking “mega-prompts” into smaller, reusable pieces. Instead of one fragile 2,000-token prompt, engineers create a library of independent modules. This prevents “instruction collision”—where changing the tone of a prompt accidentally breaks its ability to output a valid JSON file.

A big part of this is Context Engineering: separating the static instructions from the data that changes. In a production RAG (Retrieval-Augmented Generation) system, the prompt is a template where the <context> block is filled in with fresh data at the moment the user asks a question. As Jono Farrington of OptizenApp points out, this modular approach makes large-scale AI deployments much more consistent.

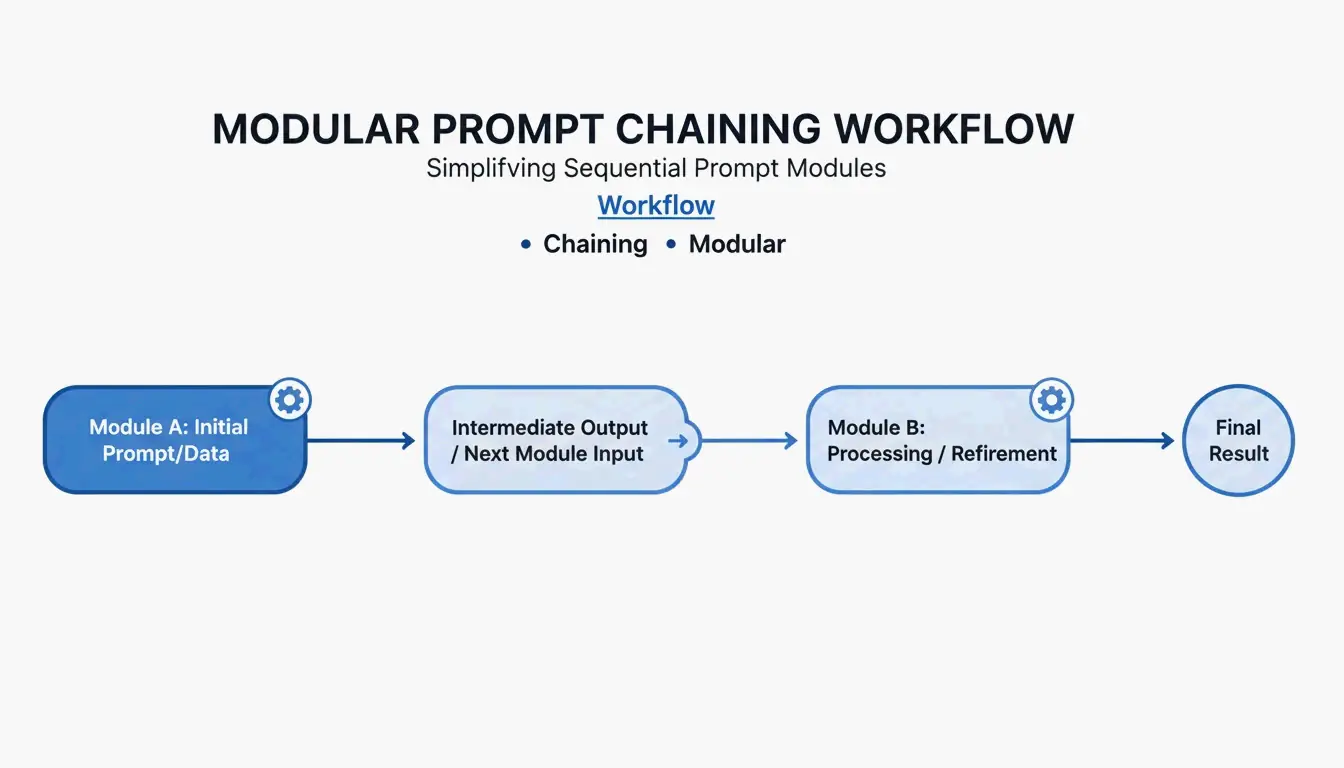

Prompt Chaining: Connecting Modular Outputs

For complex jobs, engineers use Prompt Chaining. You break a task into steps where the output of one prompt becomes the input for the next. For instance, a “Planner” module might create an outline, which then goes to an “Executor” module for the actual writing. This step-by-step approach usually improves output quality by about 35% because the model only has to focus on one sub-task at a time.

Advanced Reasoning: Incorporating Chain-of-Thought (CoT) into Formatters

To handle difficult logic, structured prompts should include Chain-of-Thought (CoT) blocks. By adding a <thought_process> tag, you tell the model to work through the problem step-by-step before it gives you an answer. This “internal monologue” helps prevent mistakes in math, coding, and strategy.

According to Zencoder, techniques like Tree-of-Thoughts (ToT) go even further by asking the model to look at several different solutions at once and pick the best one. This is especially helpful for “Vibe Coding”—a 2026 development style where a user describes an idea, and the AI has to figure out the architectural logic before writing any code.

Evaluating Reasoning Accuracy in Structured Formats

You want the model’s reasoning to be “faithful” to the facts. In a formatted prompt, you can tell the AI: “Reason inside the <thought> tags, but only give me the final answer in <json> tags.” This gives you high-quality logic without cluttering the user’s screen, though it does use more tokens and takes a bit longer.

Is Your Prompt Production-Ready? Versioning and Registry Management

The final step in professional engineering is moving away from copy-pasting and into a Prompt Registry. Prompts should be treated like software. Using Semantic Versioning (v1.0.0) allows teams to track every change and roll back instantly if a new version starts acting up.

The financial benefits are real. PromptOT notes that companies managing 50 or more prompts can save up to $400,000 a year by centralizing their management and reducing the time engineers spend “tweaking” things.

CI/CD for Prompts:

Before a prompt goes live, it should pass automated tests. This involves running the prompt against a “Golden Dataset” (a set of 50–200 test cases) and using another AI—an “LLM-as-a-judge”—to score the results. A prompt only moves from Staging to Production once it passes these quality checks.

Conclusion

Switching to structured engineering with formatters isn’t just for power users anymore—it’s a requirement for anyone building reliable AI tools. It is the bridge between “guessing” and building software that actually works. By using the RTCCO framework and XML delimiters, you can make your AI applications more accurate, secure, and professional.

To get started, look at your most common prompts and try refactoring them into the RTCCO framework using the XML template in this guide. Moving these into a version-controlled system will ensure your AI setup is ready for the high stakes of 2026 production environments.

FAQ

How do I convert my existing ‘paragraph’ prompts into the RTCCO block format?

To convert paragraph prompts, first identify the core Task and separate it from the Context. Wrap your instructions in <rules> tags and provide 3–5 examples within <examples> tags. You can use an LLM to assist in this refactoring by asking it to “re-parse this unstructured text into the RTCCO framework using XML delimiters.”

What are the best delimiters to use (XML vs. JSON vs. Markdown)?

XML is currently the gold standard for separating instructions from long-form content in models like Claude and GPT-5 due to its strict hierarchy. JSON is preferred when you need programmatic input/output for API integrations. Markdown is sufficient for simple, human-readable prompts but lacks the strict boundary definition required for complex, multi-layered production prompts.

How can I implement automated evaluation (CI/CD) for structured prompts?

Set up a testing suite that uses a “Golden Dataset” and an “LLM-as-a-judge” to score outputs against a rubric. Integrate these tests into your GitHub Actions or Jenkins pipeline. This ensures that any change to a prompt version is validated for accuracy and tone before it is deployed to your production environment.